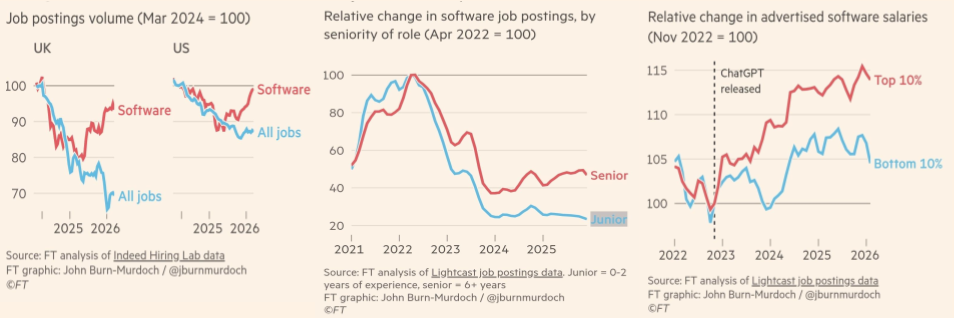

According to the FT, demand for software engineers is rising again, and in relative terms is outperforming the wider jobs market. That’s the headline most people will take away.

But the more important detail is that the growth is concentrated in more experienced roles, while entry-level hiring remains weak.

That broadly lines up with a concern I wrote about recently for Ada National College for Digital Skills: GenAI is amplifying the existing skills gap in software engineering.

Genuinely strong, experienced engineers are already a relatively small pool. If the FT data is right, demand is increasingly being concentrated into that already constrained part of the market.

That means a supply squeeze: salary inflation, harder hiring, slower execution, and more organisations unable to deliver on the strategy and goals they have set.

Further, as we saw in the pandemic hiring boom, shortage pressure creates title inflation and level distortion, such as mid-level people taking senior or lead roles at new companies. The result is organisations paying more for talent that is, on average, less experienced than the role implies, while carrying more execution risk and less capability depth than the org chart suggests.

If companies keep optimising away the entry-level layer, it is not just bad for the industry overall; it is economically short-sighted for the individual organisation. It increases future dependence on an already constrained and expensive part of the market, while missing the real value of junior engineers, which lies not just in what they contribute now but in the capability they grow into.